Your website can go from fully functional to completely unreachable in seconds—and the culprit might not be your server, application, or network. It could be DNS. I've seen teams scramble for hours trying to diagnose a "mysterious" outage, only to discover their DNS provider was experiencing regional failures that weren't visible from their monitoring location.

DNS failures are particularly insidious because they can affect specific geographic regions while appearing completely normal from your primary monitoring setup. This makes DNS monitoring not just important—it's absolutely critical for maintaining website availability.

What is DNS Monitoring and Why It Matters

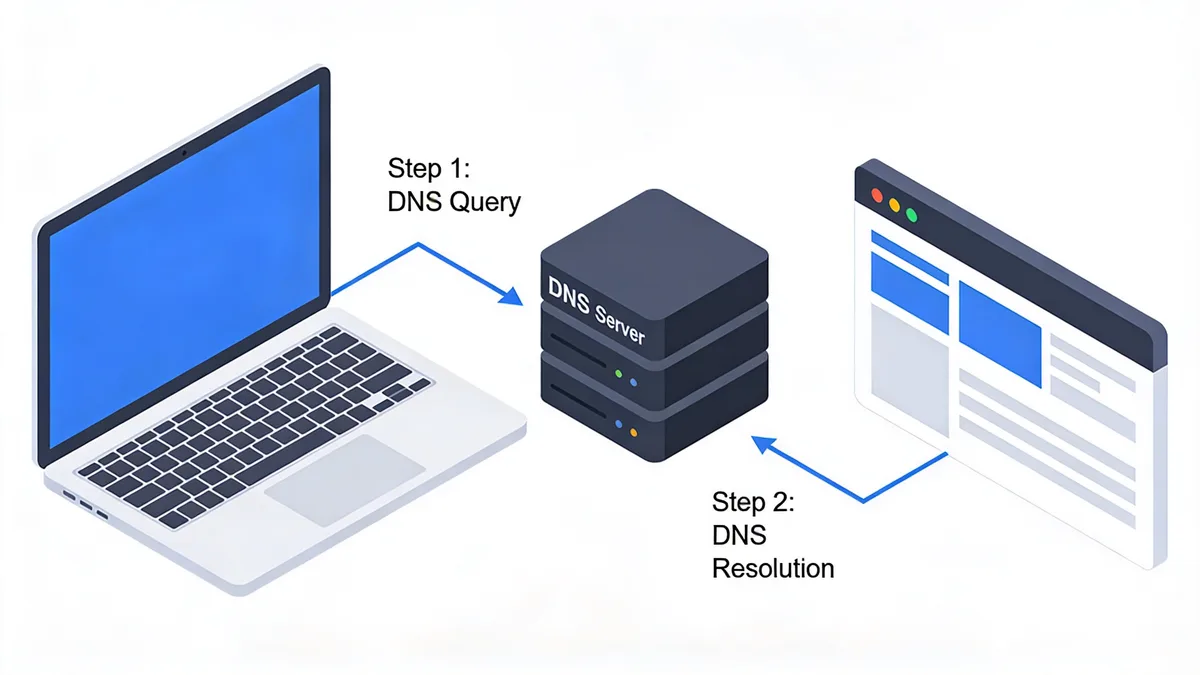

DNS monitoring is the practice of continuously checking that your domain names resolve correctly and quickly from multiple locations worldwide. It validates that when someone types your website address, they get the right IP address in a reasonable amount of time.

Think of DNS as the internet's phonebook. When users visit your site, their browser first asks DNS servers "What's the IP address for example.com?" If this lookup fails or takes too long, your website becomes unreachable regardless of how well your servers are running.

How DNS Failures Cause Website Outages

DNS failures manifest in several ways that directly impact user experience. The most obvious is complete resolution failure—when DNS queries return no results, making your website completely inaccessible.

More subtle but equally problematic are slow DNS responses. Research by IBM and Catchpoint Systems found that the global average DNS response time is 263 milliseconds. When DNS lookups exceed this significantly, users experience slow page loads or timeouts.

Regional DNS failures are particularly tricky to detect. Your monitoring might show everything working perfectly from one location while users in Asia or Europe can't reach your site at all.

The Business Impact of DNS Downtime

The financial impact of DNS-related outages can be severe. Research indicates that a 0.1-second improvement in application response times can lead to a 10% increase in sales growth, highlighting how DNS performance directly affects revenue.

In my experience working with e-commerce clients, I've seen DNS outages during peak traffic periods cost thousands of dollars in lost sales within minutes. The damage extends beyond immediate revenue loss—customer trust erodes quickly when users can't reliably access your services.

DNS attacks are also becoming more frequent and sophisticated. 350 DNS-layer attacks targeted financial institutions in just one month, according to recent threat intelligence data. These attacks can take down websites without touching the underlying infrastructure.

DNS vs Other Monitoring Types

DNS monitoring differs fundamentally from traditional uptime monitoring. Standard HTTP checks verify that your web server responds, but they don't validate the DNS resolution that happens before the HTTP request.

You might have perfect server uptime while experiencing DNS failures that make your site unreachable. This is why comprehensive monitoring strategies include both DNS validation and traditional endpoint monitoring.

DNS monitoring also provides earlier warning signs than application-level monitoring. DNS performance degradation often precedes more serious infrastructure issues, giving you time to investigate and resolve problems proactively.

Common DNS Failure Scenarios That Cause Outages

Understanding common DNS failure patterns helps you design better monitoring and response strategies. In my six years managing infrastructure, I've encountered each of these scenarios multiple times.

Authoritative Server Failures

Your authoritative DNS servers are the ultimate source of truth for your domain's records. When these servers fail or become unreachable, DNS resolution stops working entirely.

I've seen authoritative server failures caused by hardware issues, network partitions, and even simple configuration mistakes during routine maintenance. The impact can be global if you're using a single DNS provider without redundancy.

Monitoring should check that your authoritative servers respond correctly from multiple locations. Don't assume that because one server responds, all of them are working properly.

DDoS Attacks on DNS Infrastructure

DDoS attacks targeting DNS infrastructure can overwhelm your DNS providers' capacity to respond to legitimate queries. These attacks are particularly effective because they can make websites unreachable without directly targeting the web servers.

Premium managed DNS services are 35% faster than self-hosted solutions partly because they have better DDoS protection infrastructure. Providers like Cloudflare process 4.3 trillion DNS queries per day, giving them significant capacity to absorb attack traffic.

Modern DNS monitoring should track query success rates and response times during traffic spikes. Unusual patterns often indicate ongoing attacks before they completely overwhelm your DNS infrastructure.

Misconfigured DNS Records

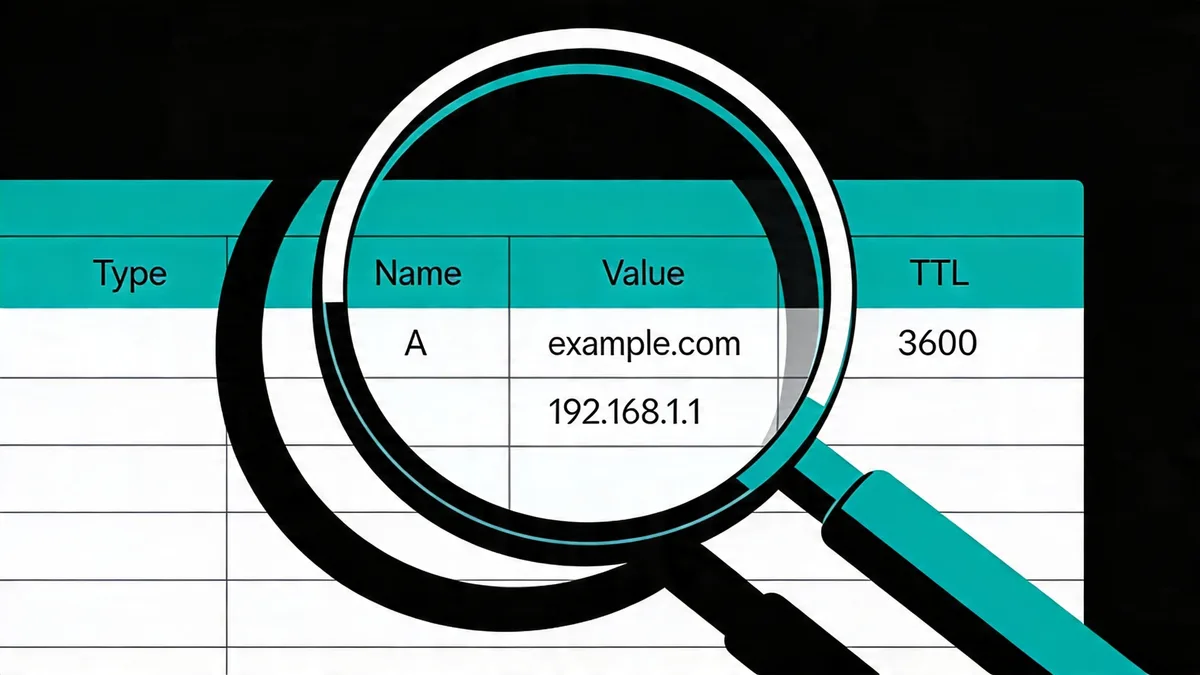

Configuration errors in DNS records are surprisingly common and can cause partial or complete outages. I've seen teams accidentally delete A records, misconfigure CNAME chains, or set TTL values that create caching problems.

The most dangerous misconfigurations are those that work initially but cause problems later. For example, setting extremely low TTL values can overload your DNS servers during traffic spikes, while very high TTL values prevent quick recovery from outages.

Monitoring should validate that DNS records return expected values, not just that queries succeed. A query that returns the wrong IP address is just as problematic as a failed query.

Regional DNS Degradation

Network congestion and routing issues can cause DNS performance to degrade in specific geographic regions while appearing normal elsewhere. This creates a particularly challenging debugging scenario.

Anycast routing problems are a common cause of regional DNS issues. When traffic gets routed to distant or overloaded DNS servers, users in affected regions experience slow resolution or timeouts.

Multi-location monitoring is essential for detecting these issues. I recommend monitoring from at least 3-5 geographically diverse locations to catch regional problems early.

Essential DNS Monitoring Metrics to Track

Effective DNS monitoring requires tracking the right metrics to detect problems before they impact users. Focus on these key measurements to build comprehensive visibility into your DNS performance.

Response Time and Latency Percentiles

DNS response time is the most fundamental metric to track. Monitor p95 and p99 latency percentiles rather than just average response times to catch performance degradation that affects a subset of users.

Average response times can be misleading because they smooth out intermittent issues. A small percentage of very slow queries can indicate developing problems that averages won't reveal.

Set baseline performance expectations based on your DNS provider's typical performance. Premium managed services typically deliver response times 16-39% faster than the global average, so adjust your thresholds accordingly.

Resolution Accuracy Validation

Monitoring that DNS queries succeed isn't enough—you need to verify they return correct results. Track whether A records resolve to expected IP addresses and CNAME records point to the right destinations.

This validation is crucial for detecting DNS hijacking attempts or configuration drift. I've seen cases where DNS records were subtly modified to redirect traffic to malicious servers while queries technically "succeeded."

Validate correct record resolution from multiple locations to ensure consistency across different DNS resolvers and geographic regions. Inconsistent results often indicate propagation issues or regional configuration problems.

Availability and Reliability Metrics

Track DNS query success rates across regions to identify patterns in failures. A 95% success rate might seem acceptable until you realize the 5% failure rate affects your largest customer segment.

Monitor DNSSEC validation status if you've implemented DNS Security Extensions. DNSSEC failures can cause resolution problems for security-conscious users and applications.

Reliability metrics should include both immediate failures and timeout scenarios. Long-running queries that eventually timeout create poor user experiences even if they technically succeed.

Setting Up Effective DNS Monitoring

Proper DNS monitoring setup requires careful planning of monitoring locations, record selection, and alerting strategies. Here's how to build monitoring that catches problems before users notice them.

Multi-Location Monitoring Setup

Deploy checks from multiple geographic locations to detect regional DNS issues that single-location monitoring would miss. I recommend monitoring from at least 3-5 locations spanning different continents.

Choose monitoring locations that represent your user base. If most of your traffic comes from North America and Europe, ensure you have monitoring coverage in both regions.

Consider using different DNS resolvers in each location to test resolution consistency. Some DNS issues only affect specific resolver chains or geographic routing paths.

Choosing the Right DNS Records to Monitor

Monitor critical A, AAAA, CNAME, and MX records that directly impact user access to your services. Don't try to monitor every DNS record—focus on those that would cause outages if they failed.

Start with your primary domain's A record and any critical subdomains. Add AAAA records if you serve IPv6 traffic and CNAME records for important aliases like www or api subdomains.

Include MX records in your monitoring if email delivery is business-critical. DNS issues affecting mail exchange records can disrupt email services even when your website works fine.

Alert Configuration Best Practices

Set up escalating alerts for different failure types rather than treating all DNS issues equally. Complete resolution failures warrant immediate alerts, while performance degradation might trigger warnings first.

Configure response time thresholds based on baseline performance rather than arbitrary values. If your typical DNS response time is 150ms, alert when it exceeds 300ms rather than using generic thresholds.

Implement alert suppression to prevent notification storms during widespread outages. Multiple DNS monitoring locations failing simultaneously often indicates a provider-level issue rather than multiple independent problems.

DNS Monitoring Tools and Platform Comparison

The DNS monitoring landscape includes specialized tools, enterprise platforms, and integrated monitoring solutions. Each approach has strengths depending on your requirements and existing infrastructure.

Enterprise vs Mid-Market Solutions

Enterprise tools like ThousandEyes offer deep diagnostics that correlate DNS failures across the entire delivery path. These platforms excel at pinpointing exactly where in the resolution chain problems occur, but they're typically overpowered for smaller organizations.

Catchpoint provides similar enterprise-grade capabilities with strong synthetic monitoring features. Both platforms are valuable when you need detailed forensics and have complex, multi-provider DNS setups.

Mid-market solutions like Dotcom-Monitor excel at DNS-focused monitoring with highly configurable checks and multi-location coverage. These tools provide the essential features most organizations need without enterprise complexity.

Cloud-Based vs Self-Hosted Options

Site24x7 integrates DNS into broader observability platforms, making it efficient for teams already using comprehensive monitoring stacks. This integration allows DNS alerts to flow into existing on-call workflows.

Self-hosted options like PRTG work well for organizations with significant on-premises infrastructure. These tools treat DNS monitoring like other network telemetry and integrate well with existing network management workflows.

UptimeRobot provides cost-effective basic monitoring that's suitable for smaller sites or supplementary monitoring. While less feature-rich than specialized tools, it offers reliable DNS change detection at low cost.

Integration Capabilities

Datadog allows DNS monitoring results to integrate with unified alert policies and incident response workflows. This integration is particularly valuable for teams prioritizing consolidated monitoring over specialized DNS tools.

SolarWinds offers real-time DNS monitoring with strong integration into network monitoring workflows. It's particularly effective for organizations already using SolarWinds for infrastructure monitoring.

Consider how DNS monitoring data will integrate with your existing tools for uptime monitoring and incident response. Unified alerting often provides more value than best-of-breed point solutions.

Troubleshooting DNS Issues and Performance Problems

When DNS monitoring alerts fire, systematic troubleshooting helps identify root causes quickly. I've developed these approaches through years of debugging DNS issues at 3 AM.

Diagnosing Slow DNS Resolution

Use dig and nslookup for manual DNS testing to validate monitoring alerts and gather additional diagnostic information. These command-line tools provide detailed timing and response data that monitoring dashboards might not show.

Compare response times across different resolvers to determine if slowness is resolver-specific or affects your authoritative servers. Query your domain using multiple public DNS services like 8.8.8.8, 1.1.1.1, and 9.9.9.9.

Check authoritative server performance by querying your DNS provider's name servers directly. Slow authoritative responses affect all downstream resolvers and indicate provider-level issues.

Identifying Regional DNS Problems

Regional DNS issues require testing from multiple geographic locations to isolate affected areas. Use online DNS testing tools that query from different countries to map the scope of problems.

Validate DNS propagation across regions when you've recently made DNS changes. Propagation delays can create temporary regional inconsistencies that monitoring might interpret as failures.

Check for anycast routing problems by comparing response times and server identities from different locations. Significant variations might indicate suboptimal traffic routing.

Resolving Intermittent DNS Failures

Intermittent failures are often the most challenging to debug because they don't consistently reproduce. Monitor DNS performance over longer time windows to identify patterns in failure timing.

Network congestion during peak hours can cause intermittent DNS issues. Compare failure rates during different times of day to identify congestion-related patterns.

TTL misconfigurations can create intermittent problems when cached records expire and refresh with different values. Review your TTL settings and ensure they align with your change management processes.

DNS Security Monitoring and Threat Detection

DNS security monitoring protects against attacks that can redirect traffic or make services unavailable. Modern threats require monitoring beyond basic availability and performance metrics.

DNS Hijacking Prevention

Monitor for unauthorized DNS record changes by tracking modifications to critical records like A, NS, and MX records. Set up alerts for any changes to these records outside of planned maintenance windows.

Implement monitoring that compares current DNS responses against expected values. Unexpected IP addresses in A record responses often indicate hijacking attempts or configuration drift.

Consider using DNS monitoring tools that maintain historical records of your DNS configurations. This historical data helps identify when unauthorized changes occurred and what was modified.

Monitoring for Malicious DNS Changes

500,000 new domains are analyzed daily for threats, highlighting the scale of malicious DNS activity on the internet. While most threats target other domains, monitoring your own DNS for suspicious changes is crucial.

Track DNS query patterns for anomaly detection. Unusual spikes in queries for specific subdomains might indicate reconnaissance activity or attempted attacks.

Monitor for new DNS records that you didn't authorize. Attackers sometimes add subdomains to legitimate domains to host malicious content or phishing sites.

DNSSEC Validation

Implement DNSSEC validation checks to ensure your DNS responses haven't been tampered with in transit. DNSSEC provides cryptographic verification that DNS responses are authentic.

Monitor DNSSEC chain validation to ensure your entire signing chain remains intact. Broken DNSSEC chains can cause resolution failures for security-conscious applications and users.

Track DNSSEC key rollover events to ensure they complete successfully. Failed key rollovers can cause validation failures that make your site inaccessible to DNSSEC-validating resolvers.

Best Practices for DNS Monitoring in 2026

DNS monitoring continues evolving as networks become more complex and security threats more sophisticated. These practices reflect current best practices and emerging trends.

Proactive vs Reactive Monitoring

Focus on pattern recognition over real-time alerts to reduce noise while catching developing issues early. Modern monitoring platforms use higher resolution data to detect transient spikes and gradual degradation.

Implement trend analysis that identifies performance degradation before it reaches critical thresholds. Gradual increases in DNS response times often predict infrastructure issues before they cause outages.

Use predictive monitoring that learns normal DNS performance patterns and alerts when behavior deviates significantly from established baselines.

Integration with Incident Response

Integrate DNS monitoring with on-call workflows to ensure DNS issues receive appropriate priority during incident response. DNS problems can cascade into application-level issues that are harder to diagnose.

Create runbooks that guide responders through DNS troubleshooting procedures. Include steps for validating DNS monitoring alerts and escalating to DNS providers when necessary.

Implement automated remediation for common DNS issues where possible. For example, automatically failing over to secondary DNS providers when primary providers show degraded performance.

Performance Optimization Strategies

Use higher resolution data to detect transient issues that traditional monitoring might miss. Short-lived DNS problems can significantly impact user experience even if overall availability remains high.

Implement redundant DNS providers for failover to reduce the impact of provider-specific outages. Configure monitoring to validate failover functionality and alert if backup providers aren't responding correctly.

Consider using DNS monitoring tools that provide detailed performance analytics to identify optimization opportunities. Understanding your DNS performance patterns helps guide provider selection and configuration decisions.

Regular DNS performance reviews help identify trends and optimization opportunities. Compare your DNS performance against industry benchmarks and consider provider changes if performance consistently lags expectations.

Frequently Asked Questions

What DNS response time should I target for good performance?

The global average DNS response time is 263 milliseconds. Premium managed DNS services typically deliver 16-39% faster responses, so aim for under 200ms for optimal user experience.

How often should I check my DNS from different locations?

Monitor DNS resolution from at least 3-5 geographic locations every 1-5 minutes. This helps detect regional issues that might not be visible from a single monitoring location.

Should I use self-hosted DNS or a managed service?

Managed DNS services are recommended for most organizations as they're 35% faster than self-hosted solutions and require less operational overhead. Consider premium providers like Cloudflare for better performance.

What DNS records are most critical to monitor?

Monitor your primary A records, AAAA records for IPv6, CNAME records for subdomains, and MX records for email. Also track NS records to ensure your authoritative servers are responding correctly.

How do I detect DNS security threats?

Monitor for unauthorized changes to DNS records, implement DNSSEC validation, and track unusual query patterns. Set up alerts for any modifications to critical DNS records like A, NS, or MX records.

What causes intermittent DNS resolution issues?

Common causes include network congestion, misconfigured TTL values, resolver-specific failures, and DDoS attacks. Use multi-location monitoring to identify if issues are regional or global.

Related: DNS Monitoring Best Practices (2026) — prevent outages with proactive DNS monitoring strategies.

Start Monitoring Your Website for Free

Get 6-layer monitoring, uptime, performance, SSL, DNS, visual, and content checks, with instant alerts when something goes wrong.

Get Started